Curator's Take

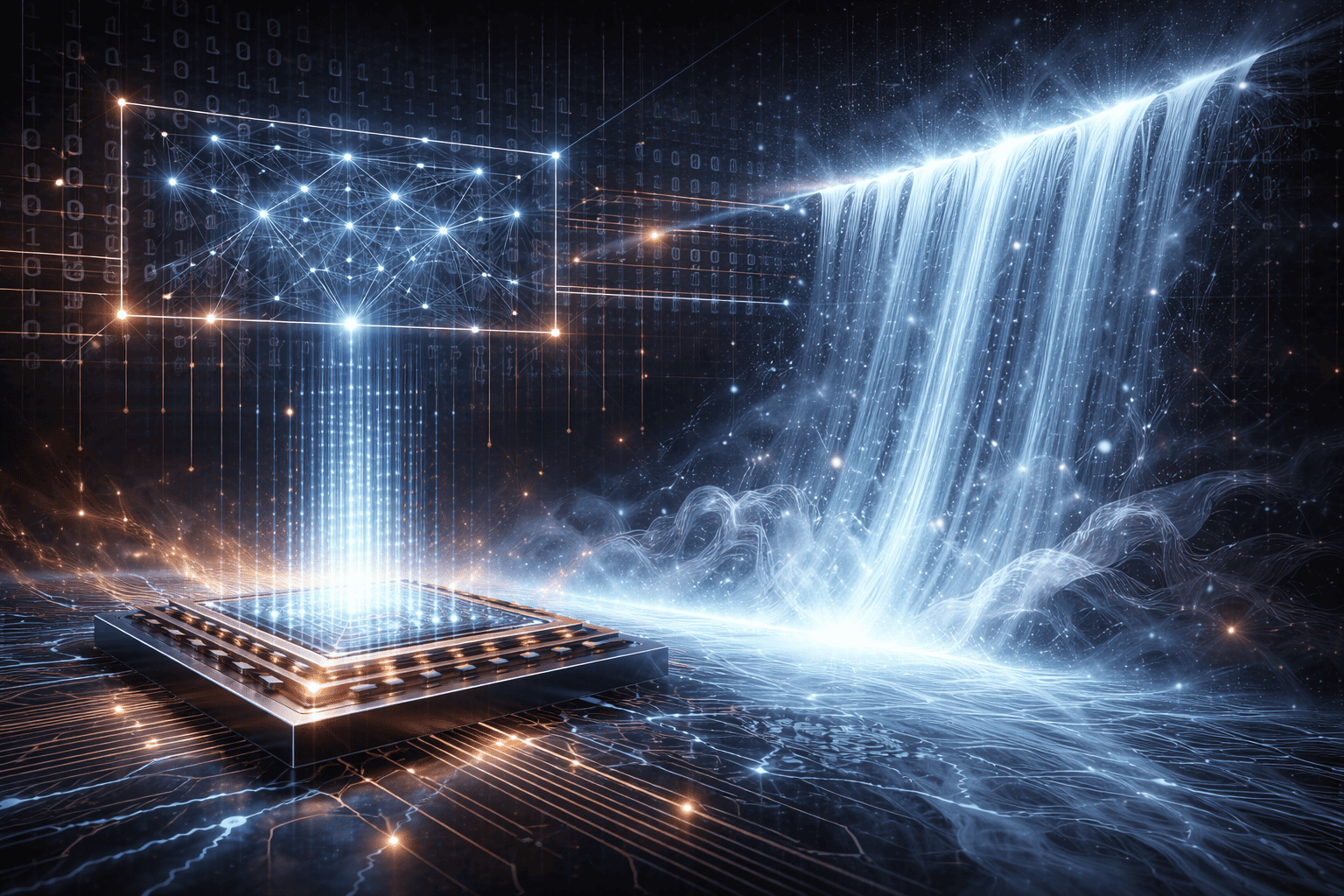

This research represents a significant step forward in tackling quantum computing's biggest challenge: error rates that currently make large-scale quantum computers impractical for most applications. The Harvard team's neural-network approach, which achieves up to 17× error reduction, could dramatically accelerate the timeline for fault-tolerant quantum computing, the point at which quantum computers become reliable enough for real-world problem solving. What makes this particularly exciting is the "waterfall effect" they describe, which suggests that AI-powered error correction does not just improve performance incrementally but can trigger cascading improvements across the entire quantum system. Although the work is still a preprint, it points toward a future in which machine learning and quantum computing each help overcome the other's limitations.

— Mark Eatherly

Summary

Insider Brief: Don’t listen to TLC. When it comes to error correction, do go chasing waterfalls. A new study shows that artificial intelligence can unlock a “waterfall” effect in error correction, sharply reducing error rates and processing time. Researchers from Harvard University reported on the preprint server arXiv that they developed a neural-network-based […]