Curator's Take

This article points to a potentially significant shift in the practical timeline for quantum computing applications. It suggests that near-term quantum devices could deliver exponential speedups for machine learning tasks without the millions of qubits typically assumed necessary for quantum advantage. The collaboration between Caltech, Google Quantum AI, and MIT lends credibility to the findings, particularly given Google's recent breakthroughs with its Willow chip and the ongoing race to demonstrate quantum utility in real-world applications. If these theoretical results translate to practical implementations, they could accelerate the adoption of quantum computing in AI and data science, potentially making quantum-enhanced machine learning accessible years sooner than previously expected. The timing is notable as the tech industry increasingly seeks computational advantages for processing the massive datasets required for modern AI systems.

— Mark Eatherly

Summary

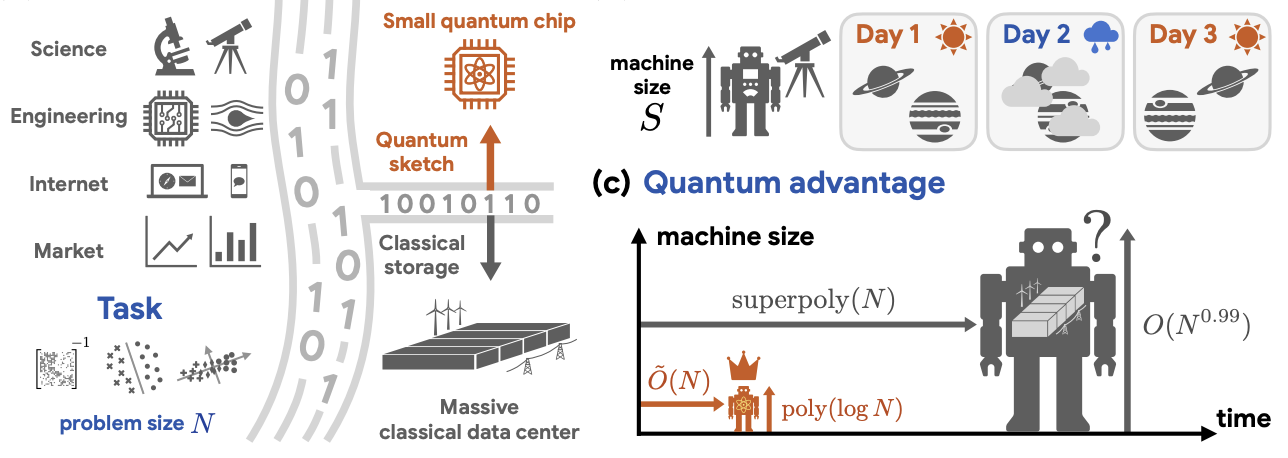

Insider Brief: Small quantum computers could process massive datasets more efficiently than far larger classical systems, according to a study recently posted on arXiv that outlines a path to exponential gains in machine learning and data analysis. The study, conducted by researchers from Caltech, Google Quantum AI, MIT and Oratomic, reports that quantum systems […]